KiloClaw Targets Shadow AI: Taming Unsanctioned AI Risks

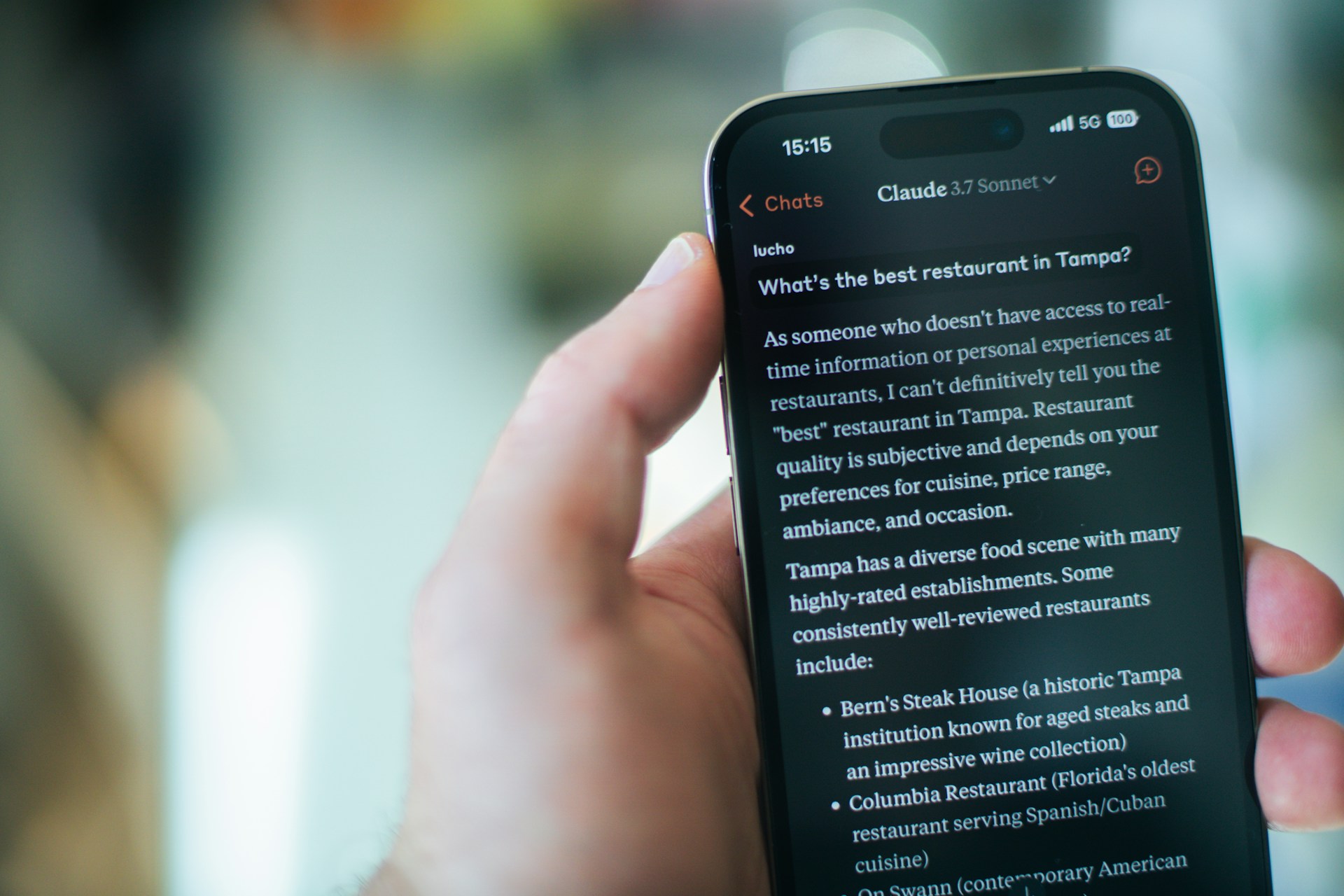

Here’s a statistic that should keep every CISO awake at night: according to a 2024 Gartner survey, more than 55% of enterprise employees have experimented with generative AI tools that were never approved by their IT department. They’re pasting customer data into ChatGPT. They’re uploading proprietary spreadsheets to AI-powered analytics platforms. They’re using code-generation tools that nobody on the security team has ever vetted.

This phenomenon — commonly called “shadow AI” — represents one of the fastest-growing blind spots in enterprise cybersecurity. And a new player in the AI governance space is stepping up with a pointed solution. KiloClaw targets shadow AI head-on, offering organizations a way to detect, catalog, and manage AI tools that employees adopt without official oversight.

In this post, we’ll break down what shadow AI actually is, why it’s so dangerous, and how emerging platforms like KiloClaw are reshaping the way enterprises approach AI governance.

What Exactly Is Shadow AI?

Shadow AI is the artificial intelligence equivalent of shadow IT, a term that has haunted enterprise security teams for over a decade. Shadow IT refers to employees spinning up unauthorized cloud services, installing unapproved software, or using personal devices for work. Shadow AI follows the same pattern, but the stakes are dramatically higher.

When someone uploads a confidential contract to an AI summarization tool, that data may end up in a training pipeline, depending on the vendor’s terms. When a developer pastes proprietary source code into a free code-completion assistant, intellectual property leaks become a real possibility. Unlike a rogue Dropbox account, which only stores files, shadow AI tools actively ingest, process, and sometimes retain the data they receive.

Why It’s Proliferating So Quickly

The explosion of accessible generative AI tools has made shadow AI nearly inevitable. Consider the timeline:

- 2022: ChatGPT launches in late November and reaches an estimated 100 million monthly users within about two months

- 2023: Thousands of AI-powered SaaS tools emerge across every business vertical

- 2024: Browser extensions, plugins, and embedded AI features make adoption frictionless

Employees aren’t being malicious. They’re trying to be productive. But every unauthorized AI tool they adopt is an unmonitored data pipeline leading outside the organization’s perimeter.

How KiloClaw Targets Shadow AI in the Enterprise

KiloClaw targets shadow AI by functioning as a detection and governance layer that sits between an organization’s network and the broader internet. Think of it as a sophisticated radar system — but instead of scanning for aircraft, it’s identifying AI-related API calls, data transmissions, and application behaviors that indicate unauthorized AI usage.

Discovery and Classification

The platform continuously monitors network traffic and endpoint behavior to identify when employees interact with AI services. It doesn’t just flag known tools like ChatGPT or Midjourney. It uses behavioral analysis to detect novel or lesser-known AI applications based on how they communicate, what data they request, and the patterns of their API interactions.
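To make the idea concrete, here is a minimal sketch of how a detection layer might combine a list of known AI hosts with simple behavioral hints. It is not KiloClaw's actual logic, which the vendor has not published here. The `HttpEvent` record, the host list, the path fragments, and the body-key hints are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Illustrative only: a real deployment would maintain a much larger, curated list.
KNOWN_AI_HOSTS = {"api.openai.com", "chat.openai.com", "api.anthropic.com"}

# Hypothetical behavioral hints: URL path fragments and JSON body keys that
# often show up in calls to text-generation APIs.
PATH_HINTS = ("/chat/completions", "/completions", "/generate")
BODY_KEY_HINTS = {"prompt", "messages", "max_tokens", "temperature"}


@dataclass(frozen=True)
class HttpEvent:
    """One observed outbound request (assumed shape of a proxy log record)."""
    host: str
    path: str
    method: str
    body_keys: frozenset  # top-level JSON keys seen in the request body


def classify(event: HttpEvent) -> str:
    """Return 'known_ai', 'suspected_ai', or 'other' for one request."""
    if event.host.lower() in KNOWN_AI_HOSTS:
        return "known_ai"
    path_hit = any(hint in event.path.lower() for hint in PATH_HINTS)
    key_hits = len(BODY_KEY_HINTS & event.body_keys)
    # Flag unknown hosts only on a POST with a path hint or two or more key hints.
    if event.method == "POST" and (path_hit or key_hits >= 2):
        return "suspected_ai"
    return "other"
```

The second branch is what lets a lesser-known service get flagged even when its hostname is on no list. Thresholds like "two or more key hints" would need tuning against real traffic to keep false positives manageable.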

Risk Scoring and Policy Enforcement

Once a shadow AI tool is detected, KiloClaw assigns a risk score based on several factors:

- Whether the tool’s data retention policies are transparent

- The sensitivity level of the data being transmitted

- The tool’s compliance posture (for example, a SOC 2 report, or documented GDPR and HIPAA compliance)

- Whether the vendor has a history of data breaches

Administrators can then set automated policies — block high-risk tools outright, allow low-risk tools with logging, or route medium-risk tools through an approval workflow.
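The scoring and policy flow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not KiloClaw's scoring model: the `ToolProfile` fields mirror the four factors listed, while the weights and the 40/70 thresholds are invented for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    ALLOW_WITH_LOGGING = "allow_with_logging"
    REQUIRE_APPROVAL = "require_approval"
    BLOCK = "block"


@dataclass
class ToolProfile:
    name: str
    transparent_retention: bool          # are data retention policies published?
    data_sensitivity: int                # 0 (public) to 3 (regulated or secret)
    certifications: set = field(default_factory=set)
    breach_history: bool = False


def risk_score(tool: ToolProfile) -> int:
    """Score from 0 to 100, higher is riskier. Weights are hypothetical."""
    score = 0
    if not tool.transparent_retention:
        score += 25
    score += 15 * tool.data_sensitivity  # contributes up to 45
    if not tool.certifications:
        score += 15
    if tool.breach_history:
        score += 15
    return score


def decide(tool: ToolProfile) -> Action:
    """Map a score to one of the three policy outcomes (assumed thresholds)."""
    score = risk_score(tool)
    if score >= 70:
        return Action.BLOCK
    if score >= 40:
        return Action.REQUIRE_APPROVAL
    return Action.ALLOW_WITH_LOGGING
```

A linear score is easy to explain to auditors, which is the main reason to start there. An organization could just as reasonably treat some factors, such as regulated data, as an automatic block regardless of the total.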

Why Traditional Security Tools Fall Short

You might wonder why existing security solutions — firewalls, CASBs, DLP platforms — can’t handle this problem. The answer lies in how AI tools operate compared to traditional SaaS applications.

A conventional cloud access security broker (CASB) can identify when someone logs into Salesforce or uploads a file to Google Drive. But AI tools often operate through browser-based interfaces, API calls embedded in other applications, or lightweight browser extensions that don’t trigger traditional security alerts.

Moreover, many AI interactions look innocuous at the network level. A text prompt submitted to an AI chatbot resembles any other HTTPS POST request. Without contextual understanding of what data is being sent and where, traditional tools simply can’t distinguish between an employee Googling a recipe and an employee feeding sensitive financial projections into an unvetted AI model.
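What "contextual understanding" might look like in practice is an inspection step that examines the outbound text itself rather than just the destination. The sketch below is a generic DLP-style content check, not a description of any particular product. The three patterns (email addresses, card-like numbers validated with the Luhn checksum, and confidentiality markers) are assumptions picked for illustration, and real deployments would use far richer classifiers.

```python
import re

# Hypothetical detectors; each is deliberately simple.
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
MARKER_RE = re.compile(r"\b(confidential|internal only)\b", re.IGNORECASE)


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to cut false positives on random digit runs."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:      # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_sensitive(text: str) -> set:
    """Return the set of sensitive-data categories found in an outbound prompt."""
    findings = set()
    if EMAIL_RE.search(text):
        findings.add("email")
    if MARKER_RE.search(text):
        findings.add("marker")
    for match in CARD_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            findings.add("card_number")
    return findings
```

With a check like this, a recipe search yields an empty set while a prompt containing a confidentiality marker and a customer email does not, even though both travel as ordinary HTTPS POST requests. Inspecting request bodies generally requires TLS interception or an endpoint agent, which carries its own privacy and operational trade-offs.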

This is precisely the gap KiloClaw aims to fill: the platform was purpose-built for this specific detection challenge rather than adapted from an older paradigm.

Real-World Scenarios Where Shadow AI Creates Havoc

To understand the urgency, consider these plausible (and increasingly common) scenarios:

- The Helpful Marketing Analyst: A marketing team member uploads an entire customer database to an AI-powered segmentation tool to generate audience insights. That database includes names, emails, purchase history, and geographic data — a GDPR nightmare waiting to happen.

- The Ambitious Software Engineer: A developer uses an AI code assistant that isn’t on the approved vendor list. The assistant is trained on code submitted by all users. Three months later, fragments of proprietary algorithms appear in a competitor’s open-source project.

- The Efficient HR Manager: An HR director pastes employee performance reviews into a generative AI tool to draft summary reports. Sensitive personnel evaluations now exist on a third-party server with unknown retention policies.

None of these individuals intended harm. All of them created significant organizational risk.

Practical Steps for Getting Ahead of Shadow AI

Whether or not you adopt a dedicated platform like KiloClaw, here are actionable steps every organization should consider right now:

- Conduct an AI audit: Survey your teams. Ask what tools they’re using. You’ll almost certainly be surprised by the results.

- Create an approved AI tool list: Give employees sanctioned alternatives so they don’t feel compelled to find their own solutions.

- Establish clear usage policies: Define what data can and cannot be entered into AI tools, and communicate these rules frequently.

- Deploy purpose-built monitoring: Traditional security tools weren’t designed for this threat. Invest in solutions specifically engineered for AI governance.

- Train continuously: Security awareness programs should now include dedicated modules on AI data risks, not just phishing and password hygiene.
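The first three steps lend themselves to a simple "policy as code" starting point. The sketch below assumes a hypothetical approved-tool list that maps each sanctioned tool to the data classes it may receive, plus a helper that tallies audit survey answers to surface unapproved tools. The tool names and data classes are placeholders, not recommendations.

```python
from collections import Counter

# Hypothetical allowlist: tool name -> data classes employees may enter into it.
APPROVED_TOOLS = {
    "internal-assistant": {"public", "internal"},
    "vendor-code-helper": {"public"},
}


def is_permitted(tool: str, data_class: str) -> bool:
    """True only if the tool is approved AND cleared for this data class."""
    return data_class in APPROVED_TOOLS.get(tool.strip().lower(), set())


def unapproved_usage(survey_responses):
    """Count tools named in an audit survey that are not on the approved list."""
    counts = Counter(name.strip().lower() for name in survey_responses)
    return [(tool, n) for tool, n in counts.most_common()
            if tool not in APPROVED_TOOLS]
```

Keeping the list in version control gives you a change history for every approval decision, and the tally from `unapproved_usage` is a reasonable way to prioritize which tools to vet first.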

The Bigger Picture: AI Governance Is No Longer Optional

We’re past the point where AI governance is a “nice to have.” Regulatory frameworks like the EU AI Act, evolving NIST guidance such as the AI Risk Management Framework, and sector-specific compliance requirements are all converging on a simple truth: organizations must know what AI tools are operating within their environments and control how data flows through them.

The fact that KiloClaw targets shadow AI reflects a broader market recognition that this problem demands specialized solutions. Just as the rise of cloud computing eventually spawned an entire category of cloud security tools, the rise of generative AI is spawning a new generation of AI-specific governance platforms.

If your organization hasn’t started addressing shadow AI, consider this your wake-up call. The tools your employees are using today are creating data exposure risks that could surface as regulatory fines, intellectual property losses, or reputation-damaging breaches tomorrow.

Start the conversation internally. Audit your environment. And explore platforms like KiloClaw that are designed from the ground up to bring visibility to the invisible AI sprawl happening across your enterprise.