Buildermark: Open Source Tool to Measure AI-Generated Code

Buildermark is a new open source tool designed to help developers and organizations measure how much of their code is AI-generated. As AI coding assistants become ubiquitous, this project addresses growing concerns around transparency, code quality, and governance in software development.

A New Open Source Tool Aims to Bring Transparency to AI-Assisted Development

In an era where AI coding assistants like GitHub Copilot, Cursor, and ChatGPT are reshaping how software gets built, a pressing question has emerged: just how much of the code in a given project was actually written by a human? Buildermark, a newly launched open source tool, is designed to answer exactly that — giving developers and organizations a concrete way to measure the proportion of AI-generated code in their repositories.

The project has already sparked significant discussion in developer communities, highlighting a growing appetite for transparency and accountability in an industry that is rapidly embracing machine-generated output.

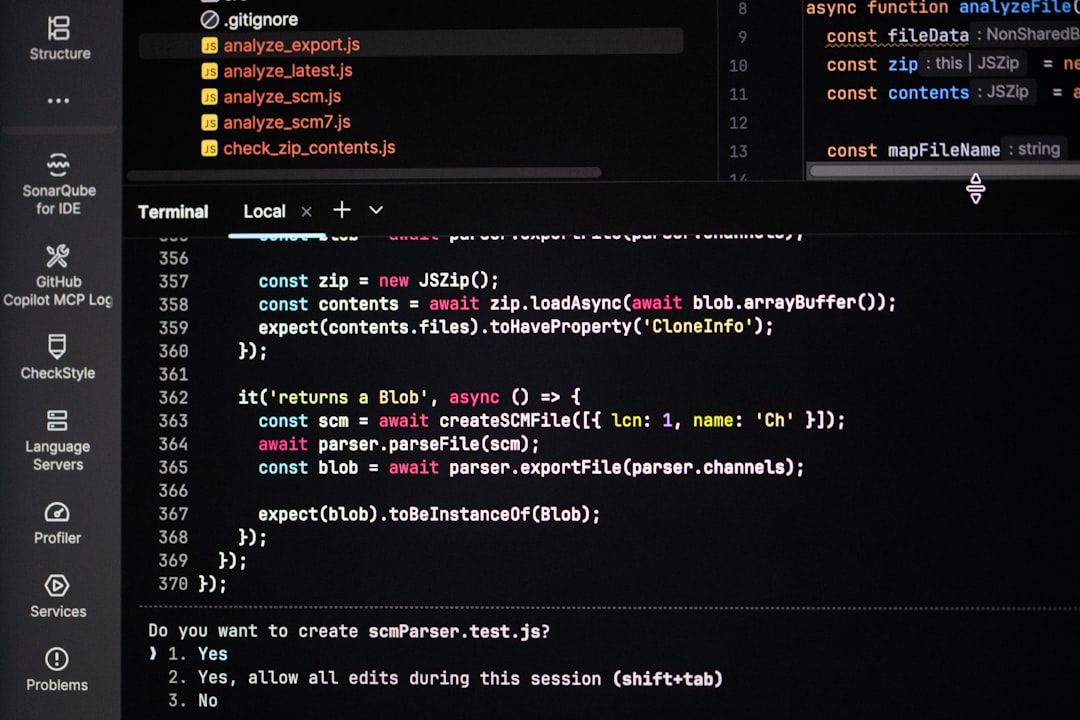

What Buildermark Does and How It Works

At its core, Buildermark provides a mechanism for tracking and quantifying the extent to which artificial intelligence contributed to a codebase. Rather than relying on guesswork or self-reporting, the tool analyzes code to surface data-driven insights about authorship and origin.

Key features of Buildermark include:

- AI contribution measurement: The tool evaluates repositories and estimates what percentage of code was generated by AI tools versus written by human developers.

- Open source availability: Buildermark’s source code is freely available, meaning anyone can inspect, modify, and contribute to the project.

- Developer-friendly integration: It’s built to slot into existing workflows, making adoption straightforward for teams already using version control and CI/CD pipelines.

By making the analysis open and auditable, Buildermark avoids the black-box problem that plagues many proprietary analytics tools.
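
The headline metric described above is, at bottom, a ratio. Here is a minimal sketch of that arithmetic, assuming each line of code has already been labeled by origin. How Buildermark actually assigns those labels is not covered here, so the `LineOrigin` labels and the `ai_share` function are hypothetical names for illustration, not Buildermark's API.

```python
from collections import Counter
from enum import Enum


class LineOrigin(Enum):
    """Hypothetical per-line labels; not taken from Buildermark."""
    HUMAN = "human"
    AI = "ai"


def ai_share(labels):
    """Return the fraction of labeled lines attributed to AI (0.0 to 1.0)."""
    counts = Counter(labels)
    total = counts[LineOrigin.HUMAN] + counts[LineOrigin.AI]
    if total == 0:
        return 0.0  # nothing labeled, so nothing attributed to AI
    return counts[LineOrigin.AI] / total


# Illustrative data only: 3 AI-attributed lines out of 8 total.
labels = [LineOrigin.AI] * 3 + [LineOrigin.HUMAN] * 5
print(f"{ai_share(labels):.1%}")  # prints "37.5%"
```

The hard part of any such tool is producing the labels, not dividing them. The ratio is only as trustworthy as the attribution step that feeds it.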

Why Measuring AI-Generated Code Matters Now

The timing of Buildermark’s release is no accident. In a 2023 GitHub survey of U.S.-based developers, 92% reported using AI coding tools, both at work and in personal projects. That is a steep adoption curve, and it raises legitimate questions about quality, security, intellectual property, and developer skill development.

For engineering managers and CTOs, understanding how much code in their products originates from AI is no longer a curiosity — it’s a governance concern. Regulatory bodies in the EU, particularly under the evolving EU AI Act, are beginning to explore requirements around transparency in AI-assisted outputs. A tool like Buildermark could become essential compliance infrastructure.

There’s also the maintenance dimension. If a significant portion of a codebase is machine-generated, organizations need to think carefully about code reviews, testing rigor, and long-term maintainability. AI-written code can introduce subtle bugs and security vulnerabilities that human reviewers might overlook if they assume another human wrote it thoughtfully.

The Bigger Picture: Code Attribution in the Age of Copilot

Buildermark enters a landscape where the boundaries between human and AI authorship are increasingly blurry. Tools like GitHub Copilot, Amazon CodeWhisperer (now part of Amazon Q Developer), and a growing roster of AI pair programmers can generate entire functions, classes, and even architectural patterns based on natural language prompts.

This has created an attribution problem. When a developer accepts an AI suggestion, modifies it slightly, and commits it — who wrote that code? The developer? The model? The training data that the model learned from? These questions aren’t purely philosophical. They carry real implications for:

- Open source licensing: If the model that generated the code was trained on copyleft-licensed repositories, there may be unresolved license obligations.

- Security auditing: Knowing the origin of code helps security teams prioritize what to review most carefully.

- Performance evaluation: Engineering metrics tied to individual output become murky when AI is doing much of the heavy lifting.

- Intellectual property claims: Companies filing patents or protecting trade secrets need clarity on human versus machine contributions.

Buildermark doesn’t solve all of these problems, but it provides the foundational data layer that makes addressing them possible.

What Experts and the Community Are Saying

The developer community response to Buildermark has been enthusiastic, with active discussion threads dissecting its methodology, potential use cases, and limitations. Several commentators have noted that this kind of tooling was inevitable — the surprise is that it took this long to arrive.

Industry analysts have been pointing out for months that organizations need better instrumentation around AI adoption. Gartner and Forrester have both flagged “AI governance in software development” as a top-priority initiative for 2025. Buildermark aligns squarely with that trend.

Some skeptics have raised valid concerns. Deciding what counts as “AI-generated” code isn’t always clear-cut. A developer might use AI to scaffold a function and then rewrite 80% of it. Does that count as AI-generated or human-written? These edge cases will likely shape how the tool evolves.
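
The scaffold-then-rewrite case can be made concrete with a line-level diff. This is a sketch of one possible way to quantify it, not a description of Buildermark's method: it assumes the original AI suggestion is available for comparison, and the `surviving_fraction` helper and the sample snippets are hypothetical. It uses Python's standard `difflib.SequenceMatcher` to count how many committed lines match the suggestion unchanged.

```python
from difflib import SequenceMatcher


def surviving_fraction(suggested, committed):
    """Fraction of committed lines that match the AI suggestion unchanged."""
    if not committed:
        return 0.0
    # Compare whole lines; autojunk is disabled so repeated lines are not ignored.
    matcher = SequenceMatcher(a=suggested, b=committed, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return kept / len(committed)


# Hypothetical AI scaffold (5 lines).
suggested = [
    "def total(items):",
    "    result = 0",
    "    for item in items:",
    "        result += item",
    "    return result",
]
# What the developer committed: only the signature line is unchanged.
committed = [
    "def total(items):",
    "    if not items:",
    "        raise ValueError('no items')",
    "    prices = [item.price for item in items]",
    "    return sum(prices)",
]
print(surviving_fraction(suggested, committed))  # prints 0.2
```

Here one line in five survives, so a strict line-matching rule would call the function 20% AI-generated, while a rule based on where the function started would call it 100%. Any measurement tool has to choose a policy somewhere along that spectrum and document it.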

What Comes Next for Buildermark

Being open source gives Buildermark a significant advantage in terms of community-driven improvement. Expect contributors to refine its detection heuristics, expand language support, and build integrations with popular platforms like GitHub, GitLab, and Bitbucket.

Looking ahead, several developments seem likely:

- Enterprise adoption: Companies with compliance requirements will be early adopters, particularly in regulated industries like finance and healthcare.

- CI/CD integration: Buildermark could become a standard step in continuous integration pipelines, generating reports alongside test coverage and linting results.

- Benchmarking standards: If widely adopted, the tool could help establish industry benchmarks for acceptable levels of AI-generated code in different contexts.
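
To illustrate the CI/CD and benchmarking ideas above, here is a sketch of a pipeline gate that compares a measured AI share against a team-chosen limit. Everything in it is an assumption for illustration: the `gate` function, the line counts, and the 50% threshold are hypothetical, and nothing here reflects an actual Buildermark command or report format.

```python
def gate(ai_lines, total_lines, max_share):
    """Return (passed, report) for a hypothetical AI-share pipeline check."""
    share = ai_lines / total_lines if total_lines else 0.0
    passed = share <= max_share
    status = "PASS" if passed else "FAIL"
    report = f"{status}: AI share {share:.1%} (limit {max_share:.1%})"
    return passed, report


# Illustrative numbers only: 420 AI-attributed lines out of 1,200.
passed, report = gate(ai_lines=420, total_lines=1200, max_share=0.5)
print(report)  # prints "PASS: AI share 35.0% (limit 50.0%)"

# A CI step would typically turn the result into a process exit code.
exit_code = 0 if passed else 1
```

Whether such a check should fail a build or only annotate it is a policy decision. Many teams would likely start by reporting the number alongside coverage and lint results before enforcing any limit.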

The project could also inspire a broader ecosystem of code provenance tools — similar to how software bill of materials (SBOM) requirements have driven new tooling in supply chain security.

The Bottom Line

Buildermark addresses a gap that the software industry has been quietly ignoring: the lack of visibility into how much of our collective codebase is being written by machines. As AI coding tools become ubiquitous, the ability to measure, track, and audit machine-generated contributions isn’t just useful — it’s becoming essential.

Whether you’re a solo developer curious about your own AI reliance, a team lead managing code quality, or an executive navigating governance requirements, Buildermark offers a transparent, open source starting point. In a field moving at breakneck speed, that kind of visibility is worth paying attention to.