Build Document Intelligence Pipelines with LangExtract

Google's LangExtract library enables developers to build advanced document intelligence pipelines that transform unstructured text into structured, source-grounded data using OpenAI models. This guide covers the full workflow from schema design and extraction to interactive visualization and tabular output.

Google’s LangExtract Opens the Door to Scalable Document Intelligence

A new coding workflow has emerged that combines Google’s LangExtract library with OpenAI’s large language models to convert messy, unstructured text into clean, machine-readable datasets. The approach, detailed in a recent technical tutorial, demonstrates how developers can build reusable pipelines capable of parsing contracts, meeting notes, product announcements, and operational logs — all while grounding extracted data to its exact source spans within the original document.

For teams drowning in unstructured information, this represents a significant leap forward. Rather than building bespoke parsers for every document type, LangExtract offers a unified framework where carefully crafted prompts and example annotations guide the model toward consistent, structured output.

What Happened: A Step-by-Step Pipeline for Structured Extraction

The workflow begins with environment setup — installing LangExtract and its dependencies, then securely configuring an OpenAI API key. This configuration allows the pipeline to tap into GPT-class models for the heavy lifting of natural language understanding.
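A minimal sketch of the key-handling part of that setup, assuming the key is supplied through an environment variable named `OPENAI_API_KEY`. The helpers `load_api_key` and `mask` are hypothetical and not part of LangExtract. Installation is typically a `pip install langextract`; the project README describes any extra needed for OpenAI support.

```python
import os
from typing import Mapping, Optional


def load_api_key(env: Optional[Mapping[str, str]] = None,
                 name: str = "OPENAI_API_KEY") -> str:
    """Return the API key from the environment, or fail with a clear message.

    Reading the key from the environment keeps it out of source control.
    The variable name is an assumption; adjust it to your own setup.
    """
    env = os.environ if env is None else env
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(f"{name} is not set; export it before running the pipeline.")
    return key


def mask(key: str, visible: int = 4) -> str:
    """Mask a secret for logging, keeping only the last few characters."""
    if len(key) <= visible:
        return "*" * len(key)
    return "*" * (len(key) - visible) + key[-visible:]
```

Failing fast on a missing key avoids a confusing authentication error deep inside the first extraction call, and masking keeps the secret out of notebook output and logs.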

From there, developers define extraction schemas that tell the system exactly what to look for. The beauty of this approach is its flexibility. A single pipeline can be adapted across wildly different document types by swapping out prompt templates and annotation examples. Here’s what the core workflow looks like:

- Schema Definition: Specify the entities, actions, deadlines, risk factors, and other attributes you want to extract from each document category.

- Prompt Engineering: Design prompts with few-shot examples so the model understands the desired output format and level of granularity.

- Extraction Execution: Feed raw text through the LangExtract pipeline, which calls the OpenAI model and returns structured extractions (serializable to JSON), each tied to a span of the source text.

- Visualization and Tabulation: Organize extracted data into pandas DataFrames and interactive visual dashboards for downstream analysis.
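The first three steps above can be sketched as follows. This is an illustration under stated assumptions, not the tutorial's code: `ExampleSpec`, `DocTypeConfig`, `CONTRACT`, `validate_examples`, and `run_extraction` are hypothetical names, and the contract sentence is made up. The `lx.extract` keyword arguments follow the OpenAI usage shown in the LangExtract README at the time of writing, so they should be checked against the installed version.

```python
import os
from dataclasses import dataclass, field


@dataclass
class ExampleSpec:
    """One few-shot example: sample text plus the extractions expected from it."""
    text: str
    extractions: list  # (extraction_class, extraction_text, attributes) tuples


@dataclass
class DocTypeConfig:
    """Prompt and examples for one document category (hypothetical helper)."""
    prompt: str
    examples: list = field(default_factory=list)


# Swapping this config for another one adapts the pipeline to a new document type.
CONTRACT = DocTypeConfig(
    prompt=("Extract parties, obligations, and deadlines. "
            "Use exact text from the document; do not paraphrase."),
    examples=[ExampleSpec(
        text="Acme Corp shall deliver the report by March 1, 2025.",
        extractions=[
            ("party", "Acme Corp", {"role": "supplier"}),
            ("obligation", "deliver the report", {}),
            ("deadline", "March 1, 2025", {"applies_to": "deliver the report"}),
        ],
    )],
)


def validate_examples(config: DocTypeConfig) -> list:
    """Return (class, span) pairs whose span is not verbatim in its example text."""
    return [(cls, span)
            for spec in config.examples
            for cls, span, _ in spec.extractions
            if span not in spec.text]


def run_extraction(text: str, config: DocTypeConfig, model_id: str = "gpt-4o"):
    """Call LangExtract with the config's prompt and few-shot examples.

    langextract is imported lazily so this module loads without it installed.
    """
    import langextract as lx

    examples = [
        lx.data.ExampleData(
            text=spec.text,
            extractions=[
                lx.data.Extraction(extraction_class=cls,
                                   extraction_text=span,
                                   attributes=attrs)
                for cls, span, attrs in spec.extractions
            ],
        )
        for spec in config.examples
    ]
    return lx.extract(
        text_or_documents=text,
        prompt_description=config.prompt,
        examples=examples,
        model_id=model_id,
        api_key=os.environ["OPENAI_API_KEY"],
        fence_output=True,
        use_schema_constraints=False,
    )
```

Running `validate_examples` before any API call is a cheap guard: few-shot examples whose spans do not appear verbatim in their own text tend to teach the model to paraphrase, which undermines source grounding.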

This last step is particularly noteworthy. By converting extraction results into tabular formats, teams can immediately plug the data into business intelligence tools, compliance dashboards, or automated alerting systems.
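As a rough sketch of that tabulation step, the snippet below flattens extraction records into rows that a DataFrame can consume. The record layout (`class`, `text`, `start`, `end`, `attributes`) is an assumption made for illustration rather than LangExtract's own result type, and `to_rows` and `to_dataframe` are hypothetical helpers.

```python
from typing import Iterable


def to_rows(doc_id: str, extractions: Iterable[dict]) -> list:
    """Flatten extraction records into one flat dict per row.

    Each input record is assumed to look like:
      {"class": str, "text": str, "start": int, "end": int, "attributes": dict}
    Attribute keys get an "attr_" prefix so they cannot collide with
    the fixed columns.
    """
    rows = []
    for ex in extractions:
        row = {
            "doc_id": doc_id,
            "class": ex["class"],
            "text": ex["text"],
            "start": ex.get("start"),
            "end": ex.get("end"),
        }
        for key, value in (ex.get("attributes") or {}).items():
            row[f"attr_{key}"] = value
        rows.append(row)
    return rows


def to_dataframe(rows: list):
    """Build a pandas DataFrame; pandas is imported lazily."""
    import pandas as pd
    return pd.DataFrame(rows)


# Made-up sample records for a single contract.
sample = [
    {"class": "deadline", "text": "March 1, 2025", "start": 38, "end": 51,
     "attributes": {"applies_to": "deliver the report"}},
    {"class": "party", "text": "Acme Corp", "start": 0, "end": 9,
     "attributes": None},
]
rows = to_rows("contract-001", sample)
```

Keeping the character offsets as columns means the table can still be traced back to the source document after it leaves the pipeline.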

Why It Matters: The Unstructured Data Problem Is Massive

Industry analysts estimate that roughly 80% of enterprise data is unstructured — trapped in PDFs, emails, Slack threads, and scanned documents. Traditional approaches to taming this chaos have relied on rule-based parsers or custom-trained NER models, both of which are brittle and expensive to maintain.

Google’s decision to release LangExtract as an open library signals a broader industry trend: commoditizing the extraction layer so that developers can focus on what they do with the data rather than how they get it out. Readers who followed our coverage in "Falcon Perception: TII's 0.6B Early-Fusion Vision Model" will recognize this as part of a larger shift toward LLM-powered tooling that abstracts away traditional NLP complexity.

The integration with OpenAI models is also strategic. While Google’s own AI division offers competing models like Gemini, making LangExtract model-agnostic (or at least compatible with OpenAI’s ecosystem) dramatically widens its potential user base.

Background: Where LangExtract Fits in the Ecosystem

LangExtract isn’t the first library to tackle structured extraction from text. Tools like spaCy, Hugging Face Transformers, and LangChain’s extraction utilities have occupied this space for years. What distinguishes LangExtract is its emphasis on source grounding: every extracted entity or attribute is linked back to the exact character span in the original document where it was found.

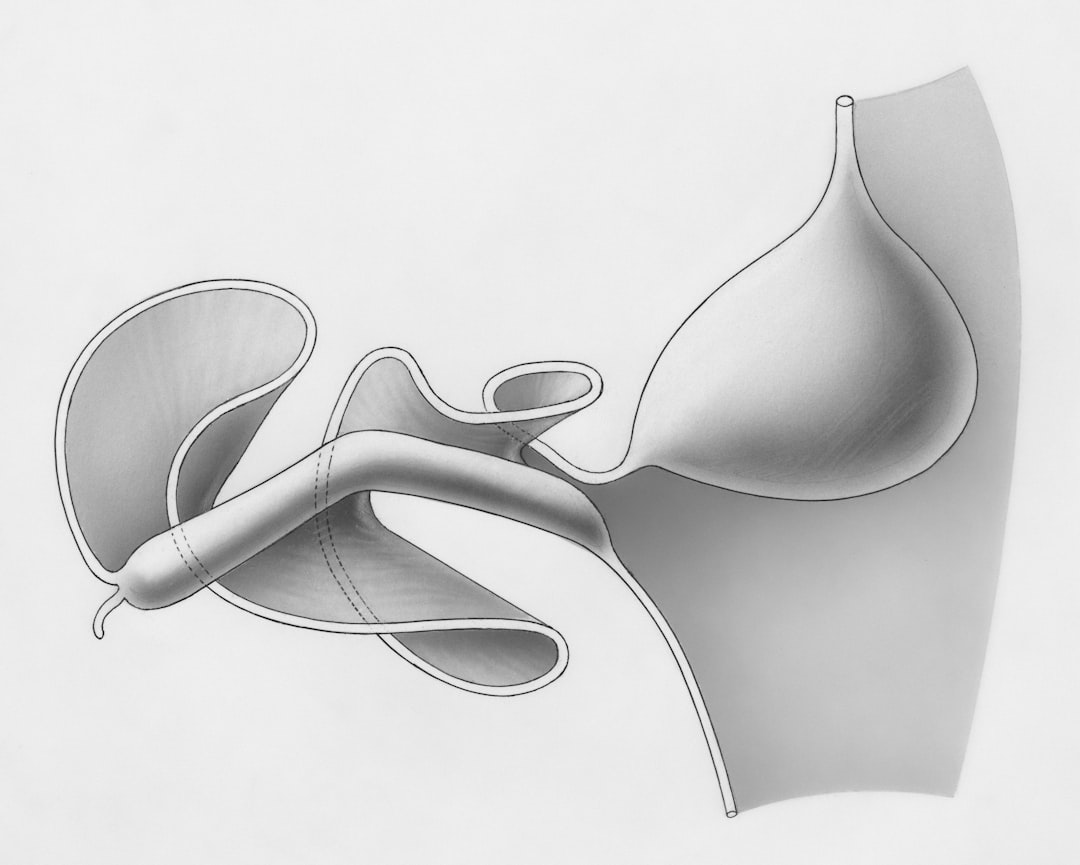

This provenance tracking is critical for high-stakes applications. In legal document review, for instance, knowing that a deadline was extracted from paragraph 14, sentence 3 of a contract is more than helpful: auditability of this kind is often a compliance expectation. Similarly, in medical records processing, auditors need to verify that extracted diagnoses trace directly to clinical notes.
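Character offsets can be translated into a human-readable location for reviewers. The sketch below maps an offset to a paragraph and line number, assuming paragraphs are separated by blank lines and the document does not start with one. `locate` is a hypothetical helper, and sentence-level positions would need a sentence splitter that is not shown here.

```python
import re


def locate(text: str, offset: int) -> tuple:
    """Return the 1-based (paragraph, line) that contains a character offset.

    Paragraph breaks are runs of blank lines; lines are split on newlines.
    """
    if not 0 <= offset < len(text):
        raise ValueError("offset is outside the document")
    before = text[:offset]
    line = before.count("\n") + 1
    paragraph = len(re.findall(r"\n\s*\n", before)) + 1
    return paragraph, line


# Made-up three-paragraph document.
doc = "Term one.\nStill paragraph one.\n\nDeadline: March 1, 2025.\n\nSigned."
position = locate(doc, doc.index("March"))
```

A reviewer can then be pointed at "paragraph 2, line 4" instead of a raw offset such as 42.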

For readers interested in the broader landscape of document processing tools, our piece "Build Production-Ready Agentic Systems with Z.AI GLM-5" provides additional context on how these technologies compare.

Community Perspective: What Developers Are Saying

The developer community has responded with cautious enthusiasm. On forums and social platforms, engineers have praised LangExtract’s clean API design and the simplicity of its prompt-plus-schema approach. Some have noted, however, that the quality of extraction is still fundamentally bounded by the underlying language model’s capabilities.

This is an important caveat. Hallucination — the tendency of LLMs to fabricate plausible-sounding but incorrect information — remains a risk in any extraction pipeline. The source grounding feature in LangExtract mitigates this to some degree, since extracted spans can be programmatically verified against the original text. But developers should still build validation layers on top of raw extraction output, especially in regulated industries.
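One possible shape for such a validation layer is sketched below. It checks each extracted span against the original text and labels it. The `Span` record and `check_grounding` function are hypothetical, and the three labels are an illustrative convention rather than anything LangExtract defines.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    """An extracted value plus the character offsets it claims to come from."""
    text: str
    start: Optional[int] = None
    end: Optional[int] = None


def check_grounding(source: str, span: Span) -> str:
    """Classify a span as 'exact', 'relocated', or 'ungrounded'.

    exact      - the claimed offsets contain exactly the extracted text
    relocated  - offsets are missing or wrong, but the text occurs in the source
    ungrounded - the text does not occur in the source at all
    """
    if span.start is not None and span.end is not None:
        if source[span.start:span.end] == span.text:
            return "exact"
    if span.text and span.text in source:
        return "relocated"
    return "ungrounded"


def ungrounded(source: str, spans: list) -> list:
    """Return the spans that should be routed to manual review."""
    return [s for s in spans if check_grounding(source, s) == "ungrounded"]
```

Spans labeled `ungrounded` are the likeliest hallucinations. In a regulated setting they would normally be dropped or sent to a human reviewer rather than written to the output table.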

As MIT Technology Review has reported extensively, the gap between impressive demos and production-ready AI systems often comes down to exactly this kind of post-processing rigor.

What Comes Next: Building Toward Autonomous Document Workflows

Looking ahead, pipelines like the one demonstrated with LangExtract are likely just the beginning. Several trends suggest where this technology is heading:

- Multi-modal extraction: Combining text extraction with image and table understanding from scanned documents and PDFs.

- Agent-driven workflows: Feeding extracted structured data directly into AI agents that can take actions — filing reports, sending alerts, or updating databases autonomously.

- Fine-tuned domain models: Using LangExtract’s annotation format to generate training data for smaller, faster, domain-specific models that can run on-device without API calls.

The document intelligence market, valued at over $5 billion in 2024 according to various industry reports, is poised for rapid growth as these capabilities mature. Google’s investment in open tooling like LangExtract positions it to capture developer mindshare even as competition intensifies from Microsoft, Amazon, and a wave of well-funded startups.

Key Takeaway

For developers and data teams looking to build robust document intelligence capabilities, LangExtract offers a compelling starting point. Its combination of prompt-driven flexibility, source-grounded extraction, and seamless integration with OpenAI models makes it one of the most practical tools to emerge in the structured extraction space this year. The real value, though, will come from the pipelines teams build around it — validation layers, visualization dashboards, and downstream automation that turn raw extraction into genuine business insight.